Vishakha Sadhwani

DevOps, MLOps, AIOps, LLMOps ~ What’s the Difference?

What to expect, how to upskill, projects, and the roles each path leads to.

Hi Inner Circle!

Welcome to this week's edition.

DevOps, MLOps, AIOps, and LLMOps often get used interchangeably ~ but they solve very different problems.

Let’s unpack what each one focuses on and how the roles are evolving.

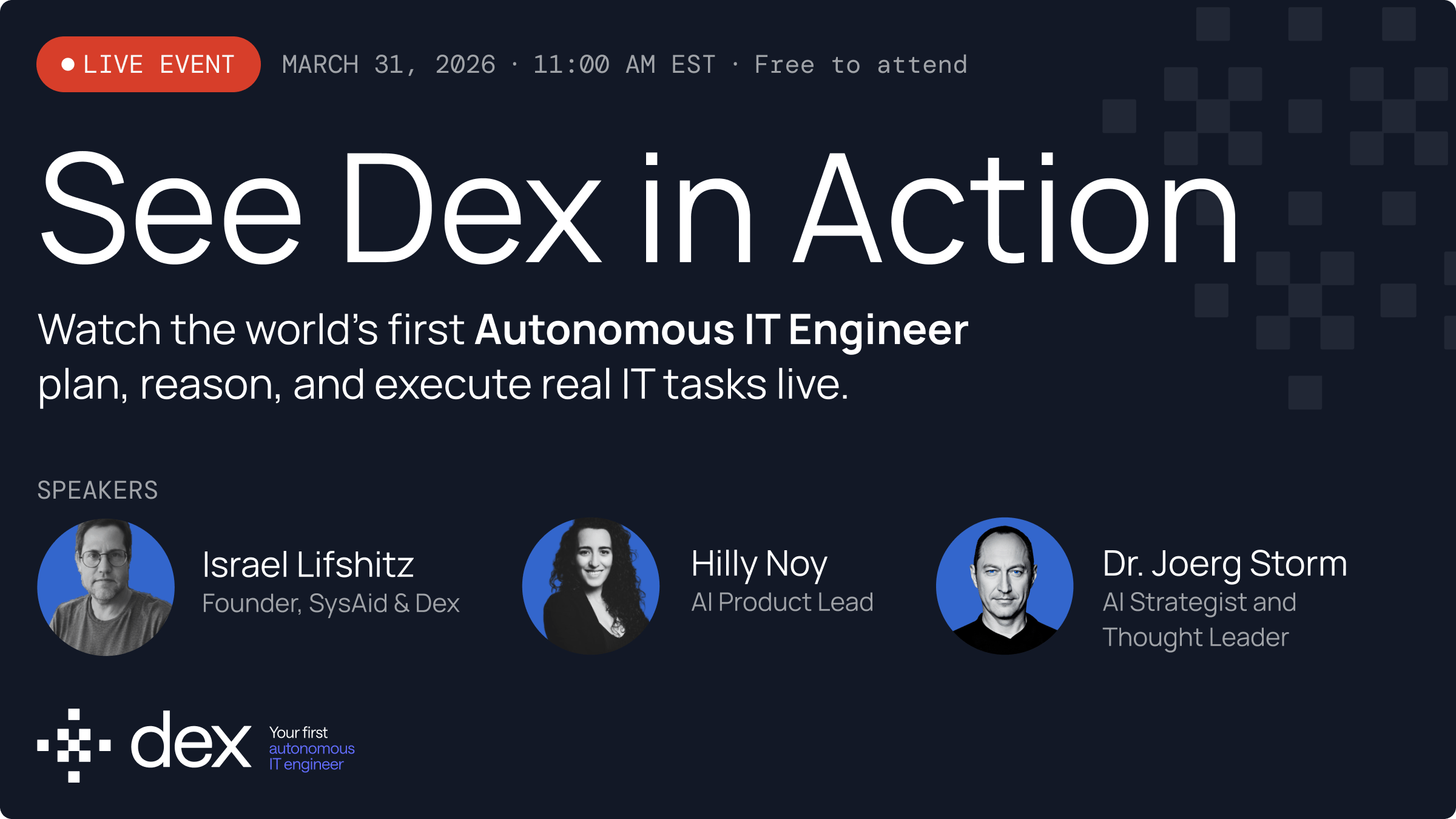

Before we begin… a quick thank you to today’s partner Dex.

Meet Dex ~ the world’s first Autonomous IT Engineer.

Dex doesn’t just assist IT teams. It investigates, plans, and executes real IT tasks with full autonomy, resolving up to 90% of IT issues before they ever become a ticket.

The technology behind Dex is already trusted by 3,000+ organizations worldwide.

🔴 Live Event ~ March 31 · 11AM EST · Free

Watch Dex plan, reason, and execute real IT tasks live.

First-time attendees also get 3 months of free, unlimited access + a dedicated onboarding engineer.

Here’s the easiest way to think about them:

- DevOps focuses on delivering software.

- MLOps focuses on operationalizing machine learning models.

- AIOps uses AI to operate infrastructure.

- LLMOps focuses on running production generative AI systems.

They share tooling and infrastructure layers, but the workflows, skillsets, and roles around them are quite different. Understanding these differences helps engineers figure out where they fit and how they might transition between these domains.

DevOps

DevOps is the foundation of modern software delivery.

It focuses on shipping code reliably using automation, CI/CD pipelines, infrastructure as code, and strong observability.

Typical workflows involve:

- planning backlogs,

- managing code through Git,

- running CI builds,

- automating tests,

- releasing via CD pipelines,

- and monitoring systems through logs and metrics.
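To make the CI/CD part of that workflow concrete, here is a minimal Python sketch of the gating idea behind a pipeline: stages run in order, and a failure stops everything downstream so a broken build never reaches deploy. The `Stage` class and `run_pipeline` function are hypothetical names for illustration; real pipelines are usually declared in a CI tool’s own configuration format rather than written like this.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Stage:
    """Hypothetical pipeline stage: a name plus a check that returns True on success."""
    name: str
    run: Callable[[], bool]


def run_pipeline(stages: list[Stage]) -> list[tuple[str, str]]:
    """Run stages in order and stop at the first failure, marking the rest as skipped."""
    results: list[tuple[str, str]] = []
    for stage in stages:
        ok = stage.run()
        results.append((stage.name, "passed" if ok else "failed"))
        if not ok:
            # Anything after a failed stage (e.g. deploy) never runs.
            results.extend((s.name, "skipped") for s in stages[len(results):])
            break
    return results


pipeline = [
    Stage("build", lambda: True),
    Stage("unit-tests", lambda: False),  # simulate a failing test suite
    Stage("deploy", lambda: True),
]
for name, status in run_pipeline(pipeline):
    print(f"{name}: {status}")
# build: passed
# unit-tests: failed
# deploy: skipped
```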

Most people transition into DevOps from software engineering, Linux/system administration, or cloud engineering. The core skills to study include Linux fundamentals, networking basics, containers (Docker), Kubernetes, CI/CD tools, Terraform, and observability stacks.

Common roles include DevOps Engineer, Platform Engineer, Infrastructure Engineer, and SRE. In many ways, DevOps remains the operational backbone that everything else in modern engineering builds on.

Check out this project to dive deeper.

MLOps

MLOps exists because machine learning systems behave differently from traditional software.

Instead of just deploying code, teams must also manage datasets, feature pipelines, experiments, and retraining cycles.
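Managing datasets starts with knowing exactly which data a model was trained on. As a minimal sketch (not how any particular tool works internally), a content hash can act as a dataset version ID that a training run records alongside its results. The function name `dataset_fingerprint` is hypothetical; dedicated data-versioning tools also handle large files and storage for you.

```python
import hashlib
import json


def dataset_fingerprint(rows: list[dict]) -> str:
    """Return a short, deterministic version ID for a small in-memory dataset.

    Each row is serialized with sorted keys, so key order inside a record
    does not change the hash; row order still does.
    """
    digest = hashlib.sha256()
    for row in rows:
        digest.update(json.dumps(row, sort_keys=True).encode("utf-8"))
        digest.update(b"\n")  # separator so row boundaries are part of the hash
    return digest.hexdigest()[:12]


v1 = dataset_fingerprint([{"age": 34, "churned": False}])
v2 = dataset_fingerprint([{"churned": False, "age": 34}])  # same data, different key order
v3 = dataset_fingerprint([{"age": 35, "churned": False}])  # one value changed
print(v1 == v2, v1 == v3)  # True False
```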

A typical MLOps lifecycle includes:

- defining the ML problem (what is this model going to do?),

- collecting and versioning data,

- performing feature engineering,

- training models,

- deploying them to serve users,

- and monitoring for drift or performance degradation.
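Monitoring for drift usually means comparing the distribution a model was trained on with what it sees in production. One commonly used metric is the Population Stability Index (PSI). The sketch below is a minimal, dependency-free version, assuming a single numeric feature and equal-width bins taken from the training data; the 0.1 and 0.25 cut-offs in the comment are a widely quoted rule of thumb, not a standard.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 10) -> float:
    """Population Stability Index of `actual` (production) against `expected` (training)."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant training feature

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Equal-width bin edges come from the training data; values outside
            # that range are clamped into the first or last bin.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor empty bins at a tiny proportion so the log term stays finite.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


train = [i / 100 for i in range(100)]               # training values spread over 0.00 to 0.99
prod_same = list(train)                             # production looks like training
prod_shifted = [0.5 + i / 200 for i in range(100)]  # production squeezed into 0.50 to 0.995

# Rule of thumb (an assumption, not a standard): below 0.1 stable, above 0.25 significant drift.
print(psi(train, prod_same))            # 0.0
print(psi(train, prod_shifted) > 0.25)  # True
```

In a real pipeline, a PSI above the chosen threshold would typically raise an alert or kick off the retraining cycle mentioned above.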

Engineers usually transition into MLOps from data science or DevOps backgrounds. Data scientists learn production systems, while DevOps engineers learn ML workflows.

Key areas to study include ML fundamentals, feature stores, experiment tracking, ML pipelines, and platforms like MLflow, Kubeflow, or Vertex AI.

Typical roles include MLOps Engineer, ML Engineer, and ML Platform Engineer.

Explore this project to dive deeper (free).

Or follow this course for a structured learning path (paid).

AIOps

AIOps applies machine learning to operate large-scale IT systems. Modern infrastructure generates massive volumes of telemetry like logs, metrics, alerts, and traces ~ and AIOps platforms use ML to analyze this data, detect anomalies, correlate incidents, and trigger automated remediation.
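Anomaly detection on telemetry can start very simply. The sketch below flags metric points that sit far outside their recent history using a rolling z-score. It is an illustrative baseline under stated assumptions (evenly spaced samples, a 20-point window, a 3-sigma threshold, made-up latency values), not how any particular AIOps platform works; production systems typically add seasonality handling and learned models on top.

```python
import statistics


def detect_anomalies(series: list[float], window: int = 20, threshold: float = 3.0) -> list[int]:
    """Return indices of points more than `threshold` standard deviations away
    from the mean of the preceding `window` points (a rolling z-score)."""
    anomalies = []
    for i in range(window, len(series)):
        history = series[i - window:i]
        mean = statistics.fmean(history)
        stdev = statistics.pstdev(history)
        if stdev == 0:
            # Perfectly flat history: any change at all counts as anomalous.
            is_anomaly = series[i] != mean
        else:
            is_anomaly = abs(series[i] - mean) / stdev > threshold
        if is_anomaly:
            anomalies.append(i)
    return anomalies


# Hypothetical latency samples (ms): a steady pattern, then one spike.
latency_ms = [100, 102, 98, 101, 99] * 4 + [100, 350, 101]
print(detect_anomalies(latency_ms))  # [21]
```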

Instead of engineers manually analyzing alerts, AIOps systems can:

- identify abnormal behavior,

- connect related signals,

- and initiate actions like rollback or scaling adjustments.
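The “connect related signals, then act” step can be sketched with simple rules. This is a simplified stand-in for the ML-driven correlation described above: alerts for the same service that fire close together are grouped into one incident, and a hand-written policy proposes an action. The signal names, the 5-minute window, and the `propose_action` rules are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    timestamp: int  # seconds since an arbitrary start time
    service: str
    signal: str     # hypothetical names, e.g. "error_rate_high", "deploy_event"


def correlate(alerts: list[Alert], window_s: int = 300) -> list[list[Alert]]:
    """Group alerts for the same service that fire within `window_s` seconds
    of the previous alert in that group into a single incident."""
    incidents: list[list[Alert]] = []
    open_by_service: dict[str, list[Alert]] = {}
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        group = open_by_service.get(alert.service)
        if group and alert.timestamp - group[-1].timestamp <= window_s:
            group.append(alert)
        else:
            group = [alert]
            open_by_service[alert.service] = group
            incidents.append(group)
    return incidents


def propose_action(incident: list[Alert]) -> str:
    """Hypothetical remediation policy based on which signals an incident contains."""
    signals = {a.signal for a in incident}
    if {"error_rate_high", "deploy_event"} <= signals:
        return "rollback"       # errors right after a deploy
    if "cpu_high" in signals:
        return "scale_out"      # capacity pressure
    return "page_on_call"       # no automated fix known


alerts = [
    Alert(0, "checkout", "deploy_event"),
    Alert(60, "checkout", "error_rate_high"),
    Alert(90, "checkout", "latency_high"),
    Alert(100, "search", "cpu_high"),
    Alert(5000, "checkout", "latency_high"),
]
for incident in correlate(alerts):
    print(incident[0].service, len(incident), propose_action(incident))
# checkout 3 rollback
# search 1 scale_out
# checkout 1 page_on_call
```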

Most professionals moving into AIOps come from SRE, DevOps, or observability engineering roles.

Important areas to study include observability platforms, anomaly detection, LLM fundamentals, time-series analysis, and monitoring ecosystems like Datadog, Splunk, or Elastic.

Typical roles include AIOps Engineer, Observability Engineer, and Reliability Platform Engineer.

If you’d like to dive deeper, this project is a great place to start.

LLMOps

LLMOps is the newest of these operational disciplines (though no longer brand new), emerging alongside large language models and generative AI systems. Unlike traditional ML pipelines, LLM systems rely heavily on prompts, retrieval pipelines, vector databases, guardrails, and evaluation frameworks.
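To make the retrieval-plus-prompt idea concrete, here is a minimal RAG-style sketch in plain Python. Word-count vectors and an in-memory list stand in for a real embedding model and vector database (both are assumptions for illustration), and the prompt template wording is hypothetical. The shape is the part that carries over: retrieve the most relevant context, then fill a template with it.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts. A real system would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query (in-memory stand-in for a vector DB)."""
    q = embed(query)
    return sorted(docs, key=lambda d: cosine(q, embed(d)), reverse=True)[:k]


PROMPT_TEMPLATE = """Answer using only the context below. If the answer is not there, say you don't know.

Context:
{context}

Question: {question}"""


def build_prompt(question: str, docs: list[str]) -> str:
    context = "\n".join(f"- {d}" for d in retrieve(question, docs))
    return PROMPT_TEMPLATE.format(context=context, question=question)


docs = [
    "Refunds are processed within 5 business days.",
    "Our office is closed on public holidays.",
    "Refunds require the original order number.",
]
print(build_prompt("How long do refunds take?", docs))
```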

The lifecycle usually includes:

- defining the AI use case,

- curating knowledge sources (external and internal),

- designing prompt templates,

- selecting models,

- implementing guardrails and evaluations,

- deploying inference systems,

- and monitoring for hallucinations and latency.
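The monitoring step can be sketched as a thin wrapper around the model call that records latency and a rough grounding check. The word-overlap score below is a crude heuristic chosen for illustration (evaluation frameworks use stronger methods, such as model-based grading), the 0.6 threshold is an arbitrary assumption, and `fake_model` is a stub standing in for a real LLM call.

```python
import re
import time
from typing import Callable


def grounding_score(answer: str, context: str) -> float:
    """Share of the answer's words that also appear in the retrieved context.

    Crude heuristic for flagging possibly ungrounded (hallucinated) answers.
    """
    words = set(re.findall(r"[a-z0-9]+", answer.lower()))
    if not words:
        return 1.0
    context_words = set(re.findall(r"[a-z0-9]+", context.lower()))
    return len(words & context_words) / len(words)


def monitored_call(generate: Callable[[str], str], prompt: str, context: str,
                   min_grounding: float = 0.6) -> dict:
    """Call a model function, recording latency and a simple grounding flag.

    `min_grounding` is an assumed threshold, not a recommended value.
    """
    start = time.perf_counter()
    answer = generate(prompt)
    latency_ms = (time.perf_counter() - start) * 1000
    score = grounding_score(answer, context)
    return {
        "answer": answer,
        "latency_ms": round(latency_ms, 2),
        "grounding": round(score, 2),
        "flagged": score < min_grounding,
    }


context = "Refunds are processed within 5 business days."
fake_model = lambda prompt: "Refunds are processed within 5 business days."  # stub LLM
print(monitored_call(fake_model, "How long do refunds take?", context)["flagged"])  # False
```

In production, these per-request records would typically be shipped to the same observability stack used for the rest of the system.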

Engineers often transition into LLMOps from MLOps, DevOps, or AI engineering roles.

Key topics to study include transformers, embeddings, vector databases, RAG architectures, prompt engineering, and GPU inference infrastructure.

Common roles include AI Engineer, Generative AI Engineer, and LLM Platform Engineer.

This video walks you through the full project, along with the key concepts behind it. Also, here’s the code repository if you want to review it.

That’s it for this week.

Pick one concept, practice it, and extend it further.

Keep showing up, keep upskilling.

See you in the next one.

-V